API Gateways vs Observability Platforms: Clearing Up the AI Tooling Confusion

If you’re building AI applications, you’ve probably encountered two categories of tools that seem to overlap: AI Gateways (like Apigee, LiteLLM, or Kong AI Gateway) and Observability Platforms (like Langfuse, LangSmith, or Helicone). Both involve SDKs, both capture LLM calls, and both show you dashboards. So what’s the actual difference?

How Developers Build AI Apps

A typical AI application flow looks like this:

Your application code constructs a prompt

An SDK or HTTP client sends that prompt to an LLM provider

The LLM returns a response

Your app processes that response (maybe chains to another call)

Eventually, a result reaches your user

At each step, you need different things: routing, cost control, retries, and caching at the transport layer; tracing, evaluation, and debugging at the development layer.
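The flow above can be sketched in a few lines. This is a minimal illustration, not a real integration: `build_prompt`, `call_llm`, and `handle_request` are hypothetical names, and `call_llm` is a stub standing in for whatever SDK or HTTP client you actually use.

```python
def build_prompt(question: str, context: str = "") -> str:
    """Step 1: application code constructs a prompt."""
    return f"Context: {context}\nQuestion: {question}"


def call_llm(prompt: str) -> str:
    """Steps 2-3: stub standing in for an SDK/HTTP call to an LLM provider.

    Transport-layer concerns (routing, retries, caching) would live here.
    """
    return f"[stubbed completion for a {len(prompt)}-character prompt]"


def handle_request(question: str) -> str:
    """Steps 1-5 end to end, with one chained call."""
    draft = call_llm(build_prompt(question))
    # Step 4: process the response and chain into a second call.
    # Development-layer concerns (tracing, evaluation) care about this structure.
    final = call_llm(build_prompt("Polish this draft", context=draft))
    # Step 5: the result reaches the user.
    return final


print(handle_request("What is your refund policy?"))
```

The two comments mark where the two tool categories attach: a gateway only ever sees what happens inside `call_llm`, while an observability platform cares about how the calls in `handle_request` relate to each other.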

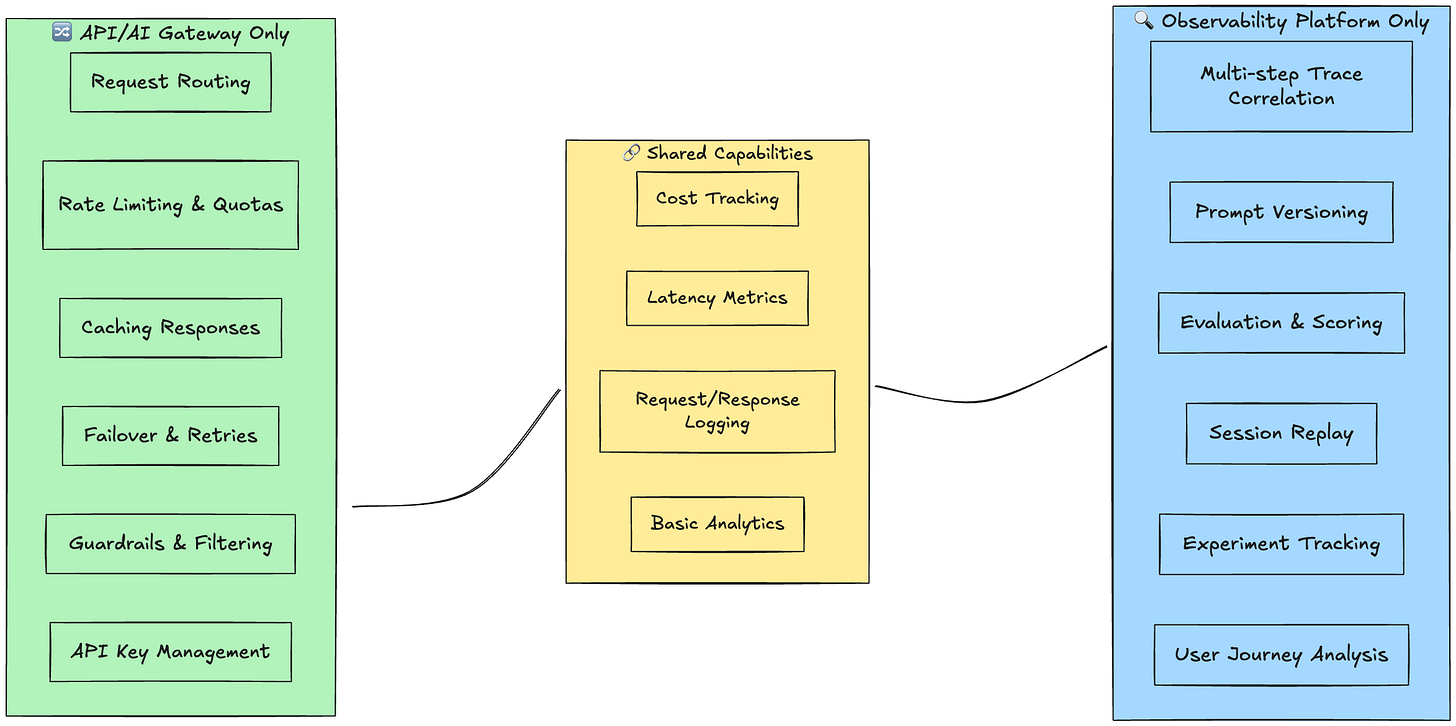

The AI Gateway: Traffic Control

An AI Gateway sits in the request path. Every LLM call flows through it.

What it does:

Routes requests to different models/providers

Enforces rate limits and quotas

Caches responses to reduce costs

Handles retries and failover

Applies guardrails and content filtering

Manages API keys centrally
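To make two of these responsibilities concrete, here is a toy, in-process sketch of caching and failover. It is an assumption-laden illustration rather than how any particular gateway is implemented: `ToyGateway`, `flaky_provider`, and `backup_provider` are hypothetical, and a real gateway runs as a separate network service.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical provider type: takes a prompt, returns text, may raise.
Provider = Callable[[str], str]


class ToyGateway:
    """Illustrative only: exact-match cache plus ordered failover."""

    def __init__(self, providers: List[Provider]) -> None:
        self.providers = providers
        self.cache: Dict[str, str] = {}

    def complete(self, prompt: str) -> str:
        # Caching: an identical prompt skips the provider call entirely.
        if prompt in self.cache:
            return self.cache[prompt]
        last_error: Optional[Exception] = None
        # Failover: try providers in order until one succeeds.
        for provider in self.providers:
            try:
                result = provider(prompt)
            except Exception as exc:
                last_error = exc
                continue
            self.cache[prompt] = result
            return result
        raise RuntimeError("all providers failed") from last_error


def flaky_provider(prompt: str) -> str:
    raise TimeoutError("simulated outage")


def backup_provider(prompt: str) -> str:
    return f"echo: {prompt}"


gateway = ToyGateway([flaky_provider, backup_provider])
print(gateway.complete("hello"))  # prints "echo: hello"
```

Note that nothing in this logic inspects what the prompt means. It only decides where the request goes and whether it needs to go anywhere at all.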

SDK integration pattern:

# Gateway as a proxy: you point your SDK at the gateway
from openai import OpenAI

client = OpenAI(
    base_url="https://your-gateway.com/v1",
    api_key="your-gateway-key",
)

The gateway is infrastructure. It doesn’t know what your prompt means or whether your chain worked correctly. It knows: request came in, went to Claude, took 2.3 seconds, cost $0.04, returned 200 OK.
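As a rough sketch of that transport-level view, the record below holds only the facts listed above. `GatewayLogRecord` and `spend_by_provider` are hypothetical names, and every record except the first is made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class GatewayLogRecord:
    """What a gateway can know about one call: transport facts only."""
    provider: str
    latency_seconds: float
    cost_usd: float
    status_code: int


def spend_by_provider(records: List[GatewayLogRecord]) -> Dict[str, float]:
    """Aggregate cost per provider, the kind of rollup a gateway dashboard shows."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record.provider] += record.cost_usd
    return dict(totals)


records = [
    GatewayLogRecord("claude", 2.3, 0.04, 200),          # the example from the text
    GatewayLogRecord("claude", 1.1, 0.02, 200),          # illustrative values
    GatewayLogRecord("other-provider", 0.8, 0.01, 429),  # illustrative values
]
print(spend_by_provider(records))
```

There is deliberately no field for "which step of the chain was this" or "was the answer any good". Those questions belong to the other layer.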

The Observability Platform: Understanding Your AI

Langfuse and similar platforms sit alongside your application. They receive telemetry about your calls.

What it does:

Traces multi-step chains and agents

Captures prompt/response pairs for debugging

Enables evaluation and scoring of outputs

Tracks experiments and prompt versions

Provides session replay and user journey analysis

SDK integration pattern:

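# Observability wraps or instruments your calls
from langfuse.openai import OpenAI  # drop-in replacement for the OpenAI client

client = OpenAI()  # Langfuse patches/wraps the SDK

# Or explicit tracing (v2-style Langfuse SDK calls; newer SDK versions expose a different tracing API)
from langfuse import Langfuse

langfuse = Langfuse()
trace = langfuse.trace(name="my-chain")
span = trace.span(name="retrieval", input=query)
# ...
span.end()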

The platform understands semantics. It knows this was step 3 of a 5-step agent, the user asked about refunds, and your evaluator scored the response 0.7 for relevance.
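A generic sketch of the data model behind that kind of understanding might look like the following. It is not Langfuse's (or any vendor's) actual schema: `Trace`, `Span`, and `add_span` are hypothetical names chosen to mirror the example in the text.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Span:
    """One step inside a chain or agent run."""
    name: str
    step: int
    input: str
    output: str = ""


@dataclass
class Trace:
    """A whole run: ordered steps plus evaluation scores."""
    name: str
    spans: List[Span] = field(default_factory=list)
    scores: Dict[str, float] = field(default_factory=dict)

    def add_span(self, name: str, input_text: str, output: str = "") -> Span:
        # Steps are numbered in the order they are recorded.
        span = Span(name=name, step=len(self.spans) + 1,
                    input=input_text, output=output)
        self.spans.append(span)
        return span


trace = Trace(name="refund-agent")
trace.add_span("classify-intent", "Can I get a refund?", "refund_request")
trace.add_span("retrieval", "refund policy")
trace.add_span("generate-answer", "refund policy + user question")
trace.scores["relevance"] = 0.7  # an evaluator's score, as in the text

print([f"{s.step}:{s.name}" for s in trace.spans], trace.scores)
```

Compared with a gateway's per-request log line, the unit here is the whole run: steps are ordered, related to each other, and scored.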

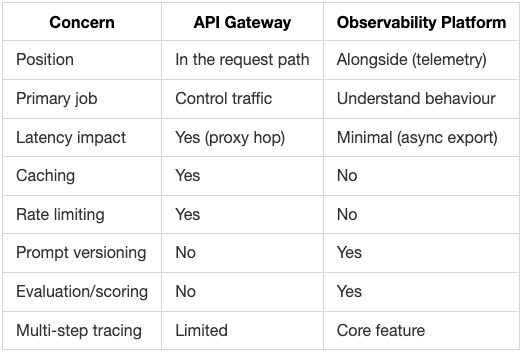

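Where They Differ

Position: a gateway sits in the request path, so every LLM call flows through it; an observability platform sits alongside your application and receives telemetry about your calls.

Knowledge: a gateway sees transport facts (which provider, how long, how much, which status code); an observability platform sees semantics (which step of a chain this was, what the user asked, how the output scored).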

Where They Intersect

The confusion arises because both capture similar data: prompts, responses, latency, tokens, and costs. Some products blur the line intentionally: Helicone is gateway-ish but observability-focused, Portkey offers both, and Apigee and Kong are gateways.

The overlap:

Cost tracking - both can tell you spend

Latency metrics - both see response times

Basic logging - both store request/response pairs

A Practical Mental Model

Think of it like traditional web applications:

API Gateway = Apigee, Kong, or AWS API Gateway → routing, auth, rate limiting

Observability Platform = Datadog APM, Jaeger → tracing, debugging, understanding

Now apply that same split to AI applications:

AI Gateway = Portkey, Apigee, LiteLLM, Kong AI Gateway → routing, caching (including semantic caching), failover, auth, guardrails, content filtering

AI Observability = Langfuse, LangSmith, Helicone → tracing chains, evaluating outputs, debugging prompts

You probably need both layers in each world.

When to Use What

Start with a gateway if:

You’re calling multiple LLM providers

You need cost controls and caching

You want centralised key management

Start with observability if:

You’re building chains or agents

You need to debug why outputs are poor

You’re iterating on prompts and need versioning

Use both when:

You’re in production with real users

You need both traffic control AND deep understanding
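One way to picture the two layers working together is an instrumented function whose LLM call goes out through a gateway. The sketch below uses only the standard library: `traced`, `TRACE_LOG`, and `call_via_gateway` are hypothetical stand-ins, with `call_via_gateway` playing the role of an SDK client whose base URL points at your gateway.

```python
import functools
import time
from typing import Any, Callable, Dict, List

# Hypothetical in-memory sink standing in for an observability backend.
TRACE_LOG: List[Dict[str, Any]] = []


def traced(func: Callable[..., str]) -> Callable[..., str]:
    """Observability layer: record name, input, output, and latency."""
    @functools.wraps(func)
    def wrapper(*args: Any, **kwargs: Any) -> str:
        start = time.perf_counter()
        result = func(*args, **kwargs)
        TRACE_LOG.append({
            "name": func.__name__,
            "input": args,
            "output": result,
            "latency_seconds": time.perf_counter() - start,
        })
        return result
    return wrapper


def call_via_gateway(prompt: str) -> str:
    """Gateway layer: stub for a client call routed through a gateway."""
    return f"answer to: {prompt}"


@traced
def answer_question(question: str) -> str:
    return call_via_gateway(f"Answer briefly: {question}")


answer_question("What is your refund policy?")
print(TRACE_LOG[0]["name"], "->", TRACE_LOG[0]["output"])
```

The application code only changes in two places: where the client points (the gateway) and what wraps the function (the instrumentation). Neither layer needs to know about the other.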

If you work in an enterprise, chances are you belong to either the AI Platform team or the API Platform team. In that case, reach out to your partner platform team to understand the capabilities on offer and how they work in practice.

Not sure if your current tooling stack has gaps or overlaps? I offer a quick API/AI platform assessment to help teams understand what they have, what they need, and where to invest next. Let’s talk. Most conversations start with a 15-minute call.